Apple often promotes the App Store as a major selling point for the iPhone and iPad. We’re told it is safe to use because we can trust the apps’ developers. Apple also assures us that we can trust the apps themselves because of the reviews left by other users.

But that doesn’t appear to be the case.

The prevalence of fake reviews in the App Store was recently addressed in a study. That is terrible news for everyone involved, but especially the developers of the apps we use regularly. But what about us users? To put it simply, it’s a nightmare.

Reviews of available apps

Which? recently carried out research in which it analysed more than 18,000 verified fake reviews found in app stores: not just the Apple App Store, but also the Google Play Store. Compared with Apple’s offering, Google’s fared far worse. Truth be told, though, we can’t always rely on user feedback in app stores.

That’s a bit of a problem, since the reviews on the App Store are kind of useless if we can’t rely on them.

Clearly, the theory is sound. Users get apps from the App Store, try them out, and then rate them based on their experience. After all, what could go wrong?

The stars can be gamed just like any other system. Or bought. Sure enough, that’s what is happening right now.

According to the Which? research, apps featuring paid-for reviews had a significantly higher rate of five-star reviews than Tinder’s 9.7%. For the health app in question, 45.8% of reviewers gave it five stars, compared with Garmin’s 6%. That is a common pattern wherever reviews are manipulated.

Unscrupulous developers, predictably, aren’t paying for negative feedback. Good reviews are what they’re buying. The very best ones. Yet that can be helpful in telling genuine reviews from fake ones. So if you buy the latest and greatest iPhone from the Apple Store, you can hopefully avoid downloading the apps with the biggest budgets for fake reviews.

How to Recognize a Fake Review

Still, if you only look at the star rating, it’s very hard to tell whether a review is fake. There are signs that something is wrong, though, so keep an eye out.

When choosing a new app or game to download from the App Store, I keep the following criteria in mind:

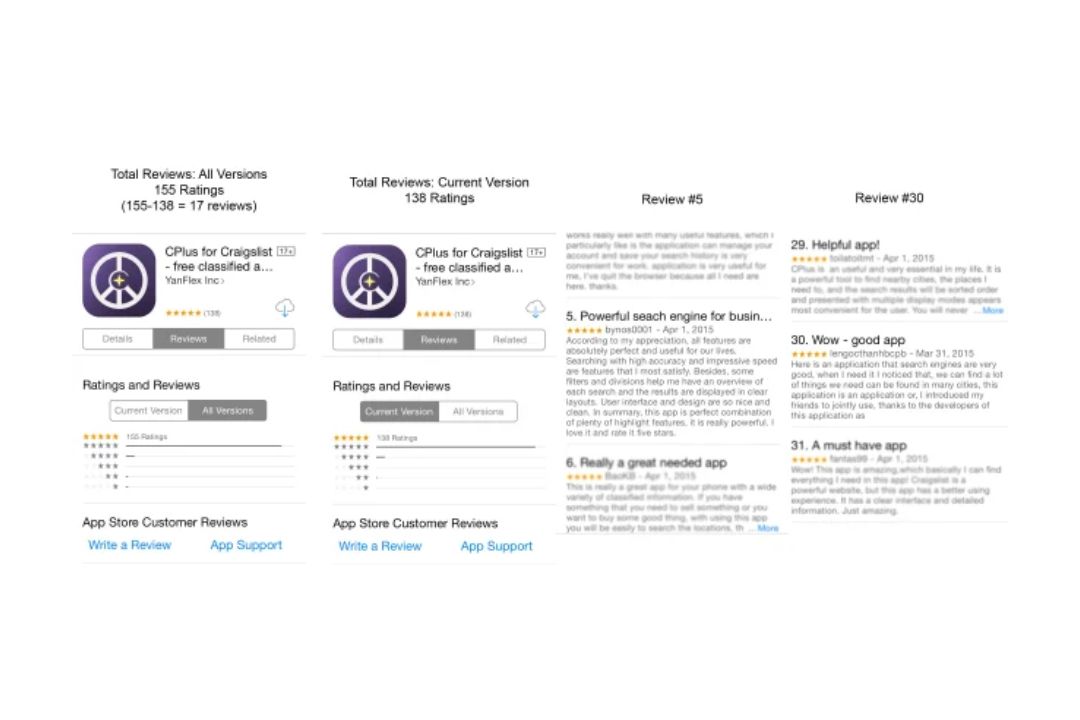

Have there been too many reviews at once? Fake ratings and reviews tend to flood an app all at once, rather than trickling in over time. Genuine reviews usually arrive over a longer period than 24 or 48 hours, unless the app suddenly becomes very popular.

Are there too many top ratings? No matter how useful or popular an app is, some people will always find fault with it. Be suspicious of any app that receives overwhelmingly positive feedback but surprisingly few complaints. That isn’t conclusive proof that anything is wrong, but rather one piece of evidence among several.

What do the reviews say? Some ratings are just stars, sure. But some people like to elaborate on what they like about the app in question. These make a fantastic promotional tool. Reviews that consistently give five stars but include absolutely no specifics are likely to be fabricated. If several reviews are published in a short amount of time, it’s likely that many of them will essentially say the same thing in different words.

Just how good is the spelling? Another red flag is the same word being consistently misspelled. Sellers of fake reviews sometimes include errors in the text in an effort to make the reviews seem more authentic. But an abundance of errors, particularly when coupled with many reviews in a short period of time, can be a telltale sign that something is amiss. You should also be wary of reviews that include random emojis or odd phrases like “I have been hooked by this app.”

Keep this in mind before you go on the offensive on social media: none of these signs by itself proves that a review is fake. But several of them together for the same app? It’s possible that something is going on.
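For readers who like to tinker, the first few checks can be sketched in Python. This is only a rough illustration, assuming you already have an app’s reviews in hand: the `Review` class, the `warning_signs` function, and every threshold are invented for this example and are not taken from the Which? research or from Apple. The spelling and wording checks are left out because they are much harder to automate.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Review:
    stars: int         # 1 to 5
    text: str          # empty for a stars-only rating
    posted: datetime   # when the review appeared


def warning_signs(reviews, burst_window=timedelta(hours=48), burst_share=0.5,
                  five_star_share=0.9, min_words=5, min_reviews=10):
    """Return the names of the warning signs that apply to an app's reviews.

    All thresholds are illustrative guesses, not measured values.
    """
    n = len(reviews)
    if n < min_reviews:
        return []  # too few reviews to say anything

    signs = []

    # Sign 1: a large share of all reviews landed inside one short window.
    times = sorted(r.posted for r in reviews)
    largest, start = 0, 0
    for end in range(n):
        while times[end] - times[start] > burst_window:
            start += 1
        largest = max(largest, end - start + 1)
    if largest / n >= burst_share:
        signs.append("burst")

    # Sign 2: almost nothing but five-star ratings.
    five_star = [r for r in reviews if r.stars == 5]
    if len(five_star) / n >= five_star_share:
        signs.append("too_many_five_stars")

    # Sign 3: five-star reviews that give no specifics (very short text).
    if five_star:
        vague = sum(1 for r in five_star if len(r.text.split()) < min_words)
        if vague / len(five_star) >= 0.5:
            signs.append("vague_praise")

    return signs
```

As with reading reviews by eye, a single sign in the returned list proves nothing; it is the combination that deserves a closer look.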

How you can help

You can read the reviews of apps on the App Store, believe it or not. If you are looking at a review that you believe is dubious, you can press and hold on it and then select the Report a Concern button. Please help out by filling out the form and tapping “Submit.”

I wish I could say that things will change, but I just don’t see how fake reviews could ever be eradicated. But surely there is still hope?

Apple says it blocked more than 94 million reviews and more than 170 million ratings in 2021. It also reported removing a further 610,000 ratings after receiving complaints from real users, which suggests the “Report a Concern” button does work.
